AI companies are currently engaged in a massive arms race over "Context Windows." We are seeing models boasting the ability to process hundreds of thousands, even millions of tokens. The implicit promise is that the AI will simply remember everything you tell it.

That promise is a myth enabling our worst work habits. Honestly, who even guaranteed that in the first place?

The Mirror of Organizational Amnesia

It's easy to blame the AI for this, but the truth is much harsher: the AI is simply acting as a mirror to our own sloppy knowledge management.

Long before AI arrived, human teams suffered from the exact same problem. How often have you sat in a meeting where people talk in circles, only to have the exact same meeting a week later because no one crystallized the outcome into a durable artifact? People walk away from verbal syncs with entirely different "pictures" in their heads because building a shared mental model is hard work.

Without a common "picture" solidified into an artifact, teams suffer from Organizational Amnesia. We are now applying that same lazy approach to AI. We treat the chat interface as a dumping ground for stream-of-consciousness thought, "slopping around" with ideas and assuming the AI will magically sort it out.

Why would we throw everything we have learned overboard just because AI has entered the picture? Suddenly people act as if "slopping around" were finally a productive option. It isn't.

Stream vs. State: The "Sloppy" Tax

A chat interface is a stream: it is transient, noisy, and chronological. Software engineering, however, relies on state: artifacts that are curated, structured, and authoritative.

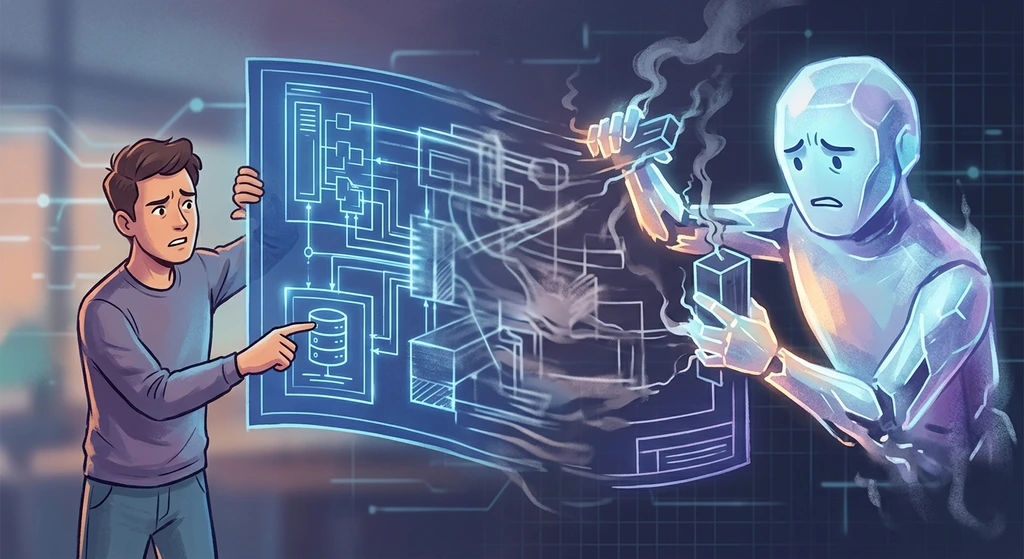

When we stop documenting decisions because "the AI knows," we aren't just being lazy; we're paying a "Sloppy Tax." We end up spending more time "re-aligning" the AI in future sessions than it would have taken to write ten lines of Markdown. When human teams have different pictures, work slows down; when an AI has the wrong picture, it builds the wrong system at 100x speed.

The "What", The "How", and The "Why"

We need to be precise about what we are actually documenting. A specification (the what) defines expected external behavior, and often needs to be so precise that it becomes code itself, like a test suite. As Gabriella Gonzalez recently noted, "a sufficiently detailed spec is code." This specification-code endures even if the underlying tech stack is entirely rewritten.

In contrast, solution-code (the how) describes the actual internal workings at a point-in-time. It is ephemeral and can be replaced by an AI at will.
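To make the distinction concrete, here is a minimal sketch in Python. The `slugify` function and the cases in `SPEC` are hypothetical, invented purely for illustration: the table of cases plays the role of specification-code, while the function body is one of many possible pieces of solution-code that could satisfy it.

```python
import re

# Specification-code (the what): expected external behavior only.
# These cases are hypothetical examples, not taken from any real project.
SPEC = [
    ("Hello World", "hello-world"),
    ("  Stream vs. State  ", "stream-vs-state"),
    ("already-a-slug", "already-a-slug"),
]


def slugify(title: str) -> str:
    """Solution-code (the how): one replaceable implementation."""
    # Collapse every run of non-alphanumeric characters into a single hyphen,
    # then trim hyphens left over at either end.
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def check_spec(implementation) -> list[str]:
    """Return a description of every spec case the implementation violates."""
    failures = []
    for given, expected in SPEC:
        actual = implementation(given)
        if actual != expected:
            failures.append(f"{given!r}: expected {expected!r}, got {actual!r}")
    return failures


if __name__ == "__main__":
    print(check_spec(slugify) or "all spec cases pass")
```

Any rewrite of `slugify`, by a human or an AI, can be checked against the same `SPEC`. Note that neither part records why these behaviors were chosen.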

But here is the critical gap in the agentic coding hype: neither the enduring specification-code nor the ephemeral solution-code captures the decisions, the constraints, or the rejected paths (the why).

The "Lost in the Middle" Phenomenon

Research into Large Language Models (LLMs) has documented a critical flaw in how they handle massive amounts of text: the "Lost in the Middle" effect. Models tend to be best at using information placed at the very beginning of a prompt and at the very end. But the middle? Recall of what sits there degrades noticeably.

But what fades first? In practice, the reasoning behind decisions degrades much faster than the decisions themselves. The AI might remember that your team decided to use PostgreSQL but forget why: for example, that you needed specific JSONB support for multi-tenancy. Stripped of the "why," the AI becomes a dangerous collaborator, technically complying with your stack choices while suggesting architectural patterns that violate your core constraints.
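One way to act on this, sketched here under assumptions: keep decisions and their reasons as curated state, and place that state at the edges of the prompt rather than leaving it buried in the transcript. The function name `build_prompt`, the section titles, and the sample task are all hypothetical, and the layout is only an illustration of the positional effect, not a technique prescribed by the paper.

```python
def build_prompt(decisions: list[str], history: list[str], task: str) -> str:
    """Put curated decisions first and restate them next to the task at the end."""
    decision_block = "\n".join(f"- {d}" for d in decisions)
    sections = [
        # Beginning of the context: the curated state, including the "why".
        "## Decisions and their reasons\n" + decision_block,
        # Middle of the context: the noisy, chronological stream.
        "## Conversation so far\n" + "\n".join(history),
        # End of the context: the task, with the constraints restated.
        "## Task\n" + task + "\n\nRespect these decisions:\n" + decision_block,
    ]
    return "\n\n".join(sections)


# Hypothetical usage; the decision mirrors the PostgreSQL example above.
prompt = build_prompt(
    decisions=["Use PostgreSQL, because we need JSONB support for multi-tenancy."],
    history=["user: ...", "assistant: ..."],
    task="Propose a schema for tenant settings.",
)
```

The point of the sketch is less the exact layout than the source of the decisions: they come from a maintained artifact, not from whatever the model can still recover from the chat.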

Solution-Code is Not The Only Dimension of Documentation

Solution-code, at its best, describes how the system is meant to work, but it does not show the reasoning behind the decisions.

A common defense is that "the codebase is the documentation." But a codebase only shows outcomes. Code does not show what was rejected. It does not show the security constraints that forced a specific implementation. When we rely purely on code and chat history, we lose the intent.

As Rahul Garg points out, code captures outcomes, not reasoning. If you use a library directly instead of building a custom abstraction in order to save time, the code doesn't tell the AI (or your future self) that the abstraction was considered and rejected. Without that "why," the AI might re-propose the very thing you already moved past.
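A decision record for exactly this case could look like the following minimal sketch. The `DecisionRecord` class, its field names, and the Markdown layout are assumptions made for illustration, not a standard format; the content mirrors the library-versus-abstraction example above.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    """A hypothetical, minimal record of a decision and the paths not taken."""
    title: str
    decision: str
    reasons: list[str]
    # Maps each rejected option to the reason it was ruled out.
    rejected: dict[str, str] = field(default_factory=dict)

    def to_markdown(self) -> str:
        """Render the record as a short Markdown document."""
        lines = [f"# {self.title}", "", "## Decision", self.decision, "", "## Why"]
        lines += [f"- {reason}" for reason in self.reasons]
        if self.rejected:
            lines += ["", "## Rejected alternatives"]
            lines += [f"- {option}: {why}" for option, why in self.rejected.items()]
        return "\n".join(lines)


record = DecisionRecord(
    title="Call the library directly",
    decision="Use the library's API directly in application code.",
    reasons=["Saves time compared with building our own layer."],
    rejected={"Custom abstraction over the library": "Considered; rejected to save time."},
)

if __name__ == "__main__":
    print(record.to_markdown())
```

The rendered file comes to roughly ten lines of Markdown, and it can be checked in next to the code so that both humans and the AI start from the same picture.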

The Real Challenge

The challenge of working with modern AI isn't prompt engineering; it's organizational discipline.

We cannot abandon the practices of capturing decisions, writing Architecture Decision Records (ADRs), and maintaining state simply because an LLM is in the loop. The AI context window is not a substitute for institutional memory. Until we stop treating AI chats as infinite filing cabinets and start treating them as ephemeral scratchpads, we will remain trapped in a loop of our own making.

Sources

| Title | Source | Date | Type |
|---|---|---|---|
| Context Anchoring | Martin Fowler / Rahul Garg | March 17, 2026 | Article |
| A sufficiently detailed spec is code | Haskell for all / Gabriella Gonzalez | March 17, 2026 | Article |
| Lost in the Middle: How Language Models Use Long Contexts | arXiv | July 2023 | Research Paper |