The Illusion of Superior Judgment

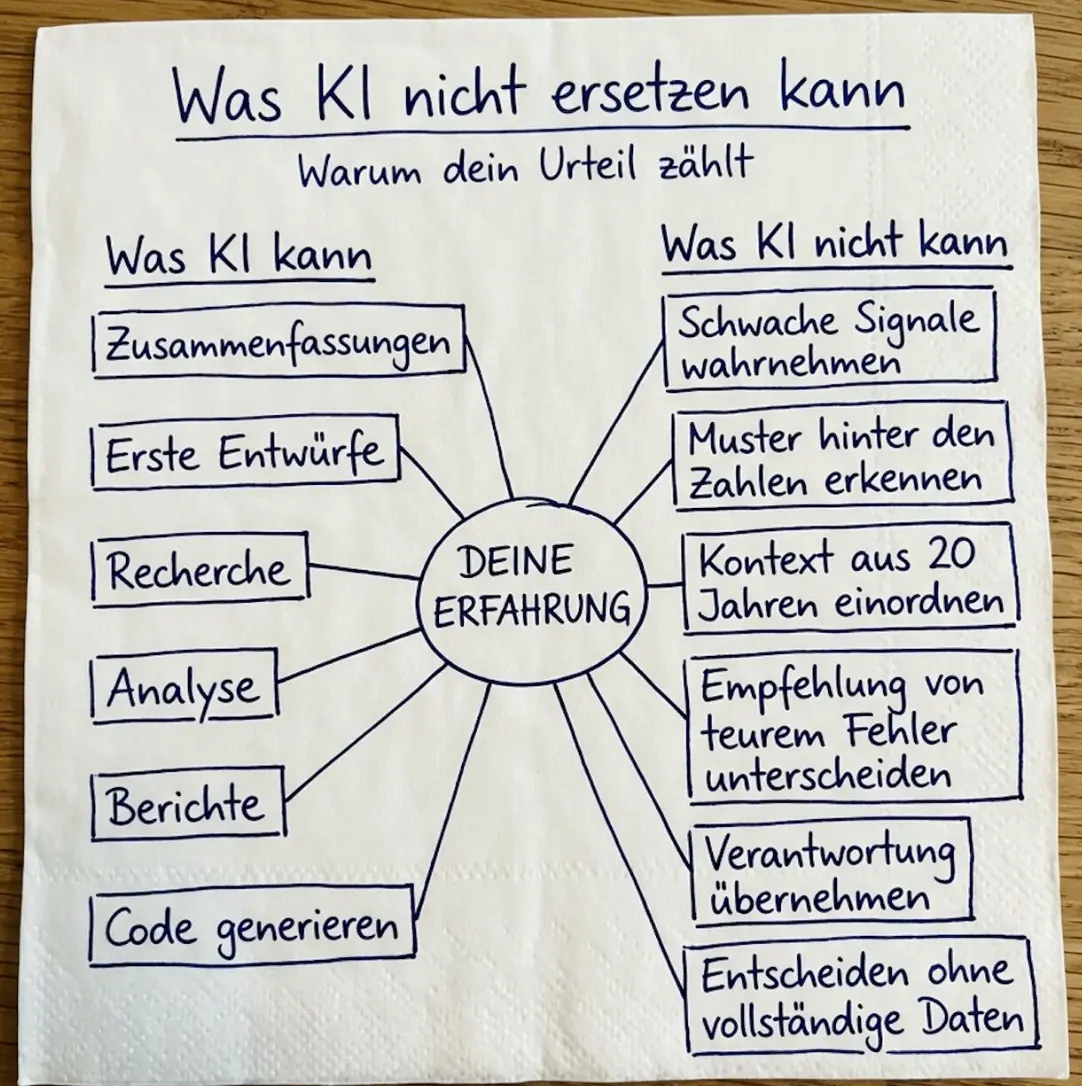

This post was triggered by a recent viral LinkedIn post featuring a handwritten napkin. The napkin draws a strict dividing line between what AI can do and what remains the exclusive domain of human experience.

It is a comforting narrative. It is also biologically and psychologically flawed.

IMPORTANT: This is a reflection on those claims. I am not arguing that AI is better at everything or that human experience is worthless. I am arguing that the specific claims about human judgment on the napkin are not supported by how our brains actually work.

What the napkin claims

The main points illustrated on the napkin:

- What AI can do: Summarizations, first drafts, research, analysis, reporting, code generation.

- What AI cannot do (but humans supposedly can): Perceive weak signals, recognize patterns behind numbers, put 20 years of context into perspective, distinguish recommendations from expensive mistakes, take responsibility, and make decisions with incomplete data.

- The Center: "Your Experience".

Even if you agree with the left side of the napkin, the right side is a dangerous trap. It romanticizes human cognition and ignores how our brains actually work.

The "Romanticized Human" temptation

A lot of AI adoption rhetoric implicitly assumes that humans are objective, rational decision-makers who act as the perfect counterbalance to AI's mechanical output. In practice, this is where things start to go wrong.

If we assume human "experience" is always an accurate compass, we overlook the fact that the human brain did not evolve to be an objective truth-seeking machine.

As evolutionary biologist Robert Trivers argued, our brains evolved for social survival. We are wired for reciprocal altruism, status, and self-preservation, all of which heavily distort how we process information.

The illusion of human judgment

When we map the claims on the napkin against cognitive psychology and evolutionary biology, the human track record looks surprisingly grim:

- Perceiving weak signals & patterns: This is actually a core weakness of the human brain. We suffer from Apophenia (seeing patterns where none exist) and Confirmation Bias (ignoring signals that contradict our beliefs). Statistical and machine-learning methods are typically far better than unaided human intuition at flagging anomalies in large, noisy datasets.

- 20 years of context: Human memory is not a hard drive; it is a reconstructive process. We suffer from Hindsight Bias and the Availability Heuristic. Often, "20 years of experience" is just one year of experience repeated 20 times, heavily distorted by the most emotional or recent events.

- Avoiding expensive mistakes: Humans are notoriously bad at cutting their losses due to the Sunk Cost Fallacy and Escalation of Commitment. We double down on bad decisions to protect our ego.

- Deciding with incomplete data: We call it "gut feeling." Psychologists call it the Anchoring Effect and Overconfidence. We routinely fill data gaps with irrational assumptions and feel completely certain doing so.

As Trivers pointed out, the ultimate irony of human cognition is self-deception. We literally hide the truth from our conscious minds so that we can more convincingly lie to others (or protect our social standing). We are not objective evaluators of context; we are highly motivated actors.

What the real dividing line is

If the napkin is wrong, what is the actual difference?

AI is a probability engine. It calculates the statistically most likely output from the data it was trained on and the input it is given. It has no ego, no social standing to lose, and no biological imperative to survive.

The human is a meaning and risk engine. We understand the real-world consequences of an action. The true human unique selling point is not that we make better decisions without data, but that we have skin in the game. When a human makes a bad decision, they can be fired, sued, or socially ostracized. AI cannot take responsibility because it cannot suffer consequences.

Challenges and countermeasures

If you want to integrate AI effectively into a team, the key is to stop treating humans as infallible oracles and start treating AI and humans as complementary systems that check each other's blind spots.

Challenge: Relying on human "gut feeling" to overrule AI data.

- Avoid it: Use AI as an active bias-checker.

- Practical example: Before finalizing a major architectural or product decision based on "experience," prompt the AI to act as a "Red Team." Ask it: "What cognitive biases might be influencing this decision? What data contradicts our current plan?"
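The red-team step can be made repeatable by generating the prompt from a small template instead of typing it ad hoc. The following is only a minimal sketch: the function name `build_red_team_prompt` and its parameters are hypothetical, and it deliberately stops at producing the prompt text, so it assumes nothing about which AI tool you paste it into.

```python
def build_red_team_prompt(decision: str, rationale: str) -> str:
    """Build a prompt that asks a model to challenge a decision before sign-off.

    Hypothetical helper for illustration; the two questions are the ones
    from the practical example above.
    """
    lines = [
        "Act as a red team reviewing the decision below.",
        f"Decision: {decision}",
        f"Rationale given by the team: {rationale}",
        "Answer the following questions:",
        "1. What cognitive biases might be influencing this decision?",
        "2. What data contradicts our current plan?",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_red_team_prompt(
        decision="Rewrite the billing service from scratch",
        rationale="A rewrite worked well on a previous project",
    ))
```

Keeping the questions fixed in a template means the same challenge is applied to every decision, not only to the ones the team already doubts.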

Challenge: Expecting AI to take responsibility for "the right choice."

- Avoid it: Explicitly separate generation from accountability.

- Practical example: AI generates the options and predicts the statistical outcomes. A designated human (who holds the risk and accountability) signs off on the consequence of that choice.
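One way to make the separation explicit is to model it in whatever tooling tracks your decisions: generated options are one kind of record, and a decision only exists once a named person has signed it. This is a sketch under my own assumptions; `Option`, `SignedDecision`, and `sign_off` are hypothetical names, not part of any existing library.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Option:
    """An option produced by the AI, with its estimated chance of success."""
    description: str
    estimated_success: float  # model estimate between 0 and 1 (assumed scale)


@dataclass(frozen=True)
class SignedDecision:
    """A decision that a named human has accepted accountability for."""
    chosen: Option
    accountable_owner: str
    signed_at: datetime


def sign_off(options: list[Option], chosen_index: int,
             accountable_owner: str) -> SignedDecision:
    """Turn a generated option into a decision; refuse if nobody owns it."""
    owner = accountable_owner.strip()
    if not owner:
        raise ValueError("a named human owner is required")
    if not 0 <= chosen_index < len(options):
        raise IndexError("chosen_index does not refer to a generated option")
    return SignedDecision(options[chosen_index], owner,
                          datetime.now(timezone.utc))
```

For example, `sign_off(options, 1, "Dana")` records Dana as the owner of the second option, while an empty owner string raises an error instead of silently leaving the decision to "the AI."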

Challenge: Overestimating the value of "past context."

- Avoid it: Externalize the context.

- Practical example: Do not let 20 years of context live solely in a senior engineer's head (where it is subject to memory decay and bias). Force the team to document the why behind past decisions so AI can ingest it and reference it objectively.
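Externalized context is easiest to reuse when every past decision is written down in the same shape. The sketch below shows one possible shape; the `DecisionRecord` class and its field names are my own illustration, and the example data is made up.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    """A written record of one past decision and the reasoning behind it."""
    title: str
    decided_on: str  # ISO date string, e.g. "2019-03-14"
    decision: str
    why: str
    rejected_alternatives: list[str] = field(default_factory=list)

    def to_text(self) -> str:
        """Render the record as plain text that can be handed to an AI tool."""
        lines = [
            f"Decision: {self.title} ({self.decided_on})",
            f"What we chose: {self.decision}",
            f"Why: {self.why}",
        ]
        for alternative in self.rejected_alternatives:
            lines.append(f"Rejected alternative: {alternative}")
        return "\n".join(lines)


if __name__ == "__main__":
    record = DecisionRecord(
        title="Message queue for order events",
        decided_on="2019-03-14",
        decision="Use a managed queue instead of self-hosting",
        why="The team had no on-call capacity for broker maintenance",
        rejected_alternatives=["Self-hosted broker on existing servers"],
    )
    print(record.to_text())
```

The important field is `why`: it captures the reasoning at the time of the decision, before hindsight has a chance to rewrite it.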

Closing

AI is changing the speed of production, and in doing so it exposes the flaws in human judgment.

The fastest way to lose effectiveness is to assume human experience is perfectly objective. The fastest way to gain effectiveness is to use AI to counter our evolutionary biases, while relying on humans for what they do best: carrying the weight of the consequences.

Sources and related concepts

| Topic | Source | Why it matters here |
|---|---|---|
| Heuristics, bias, overconfidence | Daniel Kahneman, Thinking, Fast and Slow | Explains why confidence is not the same as judgment quality. |
| Judgment under uncertainty | Amos Tversky and Daniel Kahneman, Judgment under Uncertainty: Heuristics and Biases | Foundational paper on systematic decision errors. |
| Inattentional blindness | Christopher Chabris and Daniel Simons, The Invisible Gorilla | Shows how obvious signals can disappear under attention constraints. |
| Psychological safety | Amy Edmondson, Psychological Safety and Learning Behavior in Work Teams | Explains why people may hide risk signals in unsafe environments. |
| Groupthink | Irving Janis, Victims of Groupthink | Explains how teams can suppress dissent and converge on poor decisions. |
| Escalation of commitment | Barry Staw, Knee-Deep in the Big Muddy | Explains why people continue failing courses of action. |
| Self-deception | Robert Trivers, Deceit and Self-Deception | Gives an evolutionary lens on why humans may distort reality in self-serving ways. |